Most AI video tools can generate something impressive.

Very few can generate something usable.

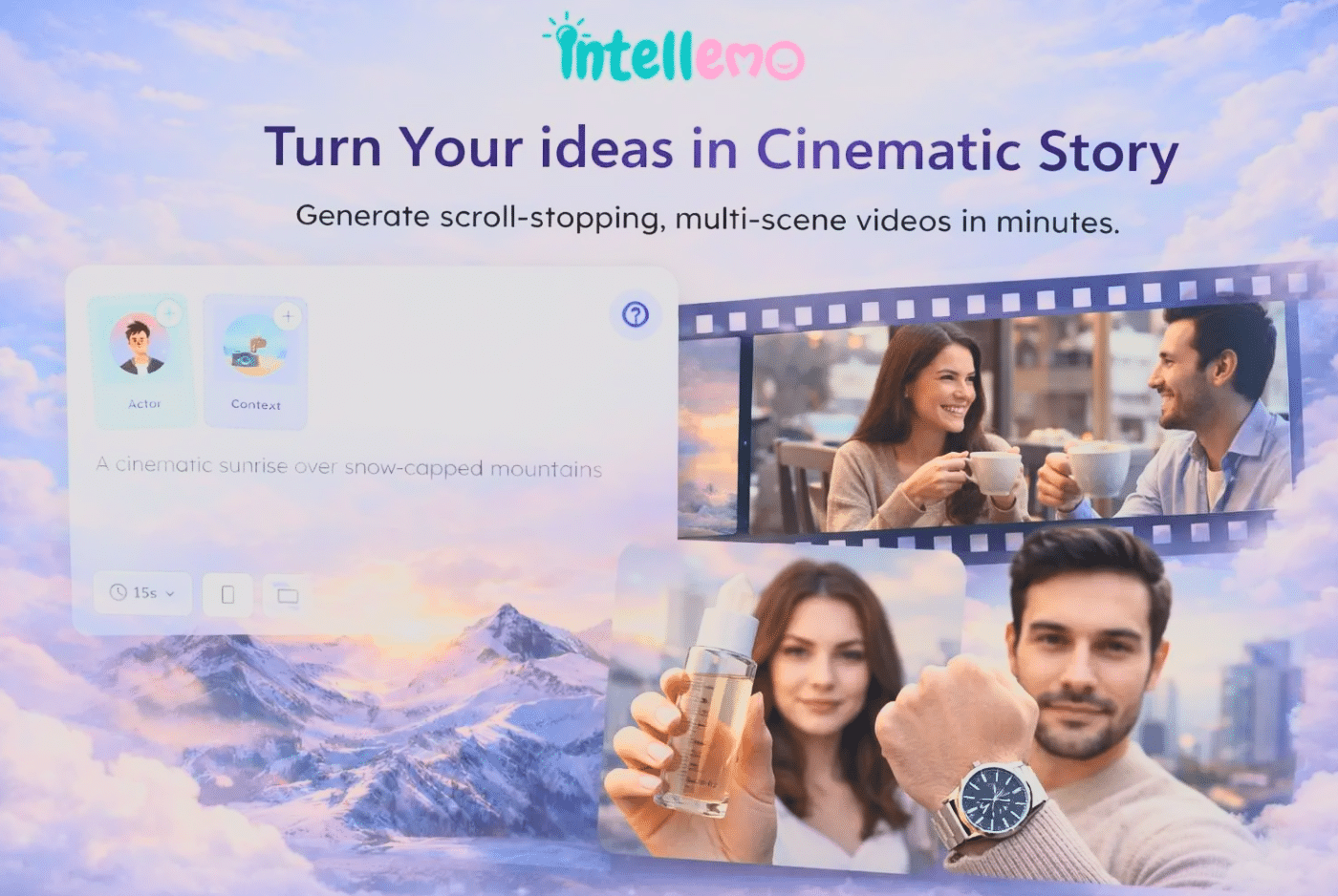

That difference has quietly defined the entire category. For a long time, AI video has been judged by how good a single frame looks. In practice, what matters is whether a video stays consistent long enough to be used in a real workflow, and that is what a new generation of structured, workflow-driven video systems is starting to address.

That is where most systems have struggled and where the biggest shift is now happening.

Contents

- The real problem was never generation

- Why continuity became the hardest problem

- Lip sync is where realism breaks first

- Prompting is a structure problem, not a creativity problem

- Model selection shouldn’t be part of the workflow

- Speed only matters when the output holds

- From generating clips to generating complete sequences

- What a reliable video generation system looks like

- The shift from output quality to output reliability

- Where this leads

The real problem was never generation

Generating visuals is no longer difficult.

Modern models can produce high-quality frames, realistic faces, and convincing motion in short bursts. The problem starts when those outputs need to go beyond a few seconds.

Cinematic video is not just a collection of moments. It is a continuous sequence.

That sequence depends on stability:

- The same character across scenes

- The same environment over time

- Natural transitions between shots

- Audio that matches visual motion

Most tools break under this requirement.

A face changes slightly between cuts.

Lip sync drifts out of time.

Lighting resets between scenes.

Individually, these issues seem small. Together, they make the video unusable.

Why continuity became the hardest problem

Continuity is not a visual problem. It is a systems problem.

Each scene in a video depends on the previous one. That means the system needs to maintain context over time about what the character looks like, how they move, what the environment feels like, and how the story progresses.

Most generation pipelines are not built for this. They treat scenes as separate outputs instead of connected states.

That works for short clips. It fails for longer videos.

This is why long-form AI video has fallen short of expectations. The limitation is not creativity or model capability. It is the inability to maintain consistency across the entire sequence.
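The difference between separate outputs and connected states can be sketched in a few lines. This is a minimal illustration, not any platform's actual implementation: `SceneState`, its fields, and `plan_scenes` are hypothetical names chosen for the example. The point is that each scene starts from the previous scene's state and changes only what is explicitly asked to change.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SceneState:
    """Hypothetical context that has to survive from one scene to the next."""
    character: str    # what the character looks like
    environment: str  # where the scene takes place
    lighting: str     # how the scene is lit


def plan_scenes(initial: SceneState, changes: list[dict]) -> list[SceneState]:
    """Derive each scene's state from the previous one.

    Each entry in `changes` lists only the fields that differ in that scene.
    Everything else is carried forward instead of being regenerated.
    """
    states = []
    current = initial
    for change in changes:
        current = replace(current, **change)
        states.append(current)
    return states


opening = SceneState(
    character="woman in a red coat",
    environment="city street",
    lighting="overcast daylight",
)
scenes = plan_scenes(
    opening,
    [{}, {"environment": "cafe interior"}, {"lighting": "warm lamp light"}],
)
```

In this sketch the character description is never restated after the opening scene, yet every later state still carries it. A pipeline that builds each scene from scratch has nothing equivalent to carry forward.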

Lip sync is where realism breaks first

If continuity is the backbone of video, lip sync is where realism is tested.

People are highly sensitive to speech alignment. Even a small mismatch between audio and mouth movement is enough to break immersion. It does not matter how realistic the visuals are: if the lip sync is off, the output feels artificial.

Fixing this is not just about syncing audio to frames. It also requires:

- Consistent facial structure

- Accurate phoneme mapping

- Stable motion across frames

Most tools approximate this. Very few maintain it across longer sequences.

That is why lip sync has remained one of the clearest signals of whether a system is ready for production.
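One way to make "maintained across longer sequences" concrete is to measure it. The sketch below assumes that timestamps for speech onsets and for the matching mouth movements are already available from some upstream step. The function names and the 40 ms tolerance are assumptions for illustration: 40 ms is simply one frame at 25 fps, not a perceptual standard.

```python
def max_sync_offset_ms(audio_onsets_ms: list[float], mouth_onsets_ms: list[float]) -> float:
    """Largest absolute gap between paired audio and mouth-movement onsets."""
    if len(audio_onsets_ms) != len(mouth_onsets_ms):
        raise ValueError("onset lists must have the same length")
    return max(
        (abs(a - m) for a, m in zip(audio_onsets_ms, mouth_onsets_ms)),
        default=0.0,
    )


def is_in_sync(
    audio_onsets_ms: list[float],
    mouth_onsets_ms: list[float],
    tolerance_ms: float = 40.0,  # placeholder threshold, an assumption
) -> bool:
    """True when no paired onset drifts further apart than the tolerance."""
    return max_sync_offset_ms(audio_onsets_ms, mouth_onsets_ms) <= tolerance_ms


audio = [0.0, 500.0, 1000.0]
steady_mouth = [10.0, 520.0, 1030.0]     # small, stable offsets
drifting_mouth = [10.0, 560.0, 1120.0]   # offset grows as the clip runs
```

With these illustrative numbers, the steady track stays within tolerance while the drifting track does not, even though both start out only 10 ms off. That is the pattern that makes short clips look fine and longer sequences fall apart.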

Prompting is a structure problem, not a creativity problem

Another reason video generation feels inconsistent is how prompts are used.

They are often treated as creative inputs:

write something → generate → adjust → repeat

That approach works for exploration. It does not work for reliability.

In a video system, a prompt acts more like a structural input. It defines:

- Scene order

- Character behavior

- Tone and pacing

- What must remain consistent

When that structure is missing, the system starts improvising. That is when drift happens: characters change, scenes lose coherence, and outputs become unpredictable.
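A structural prompt can be pictured as a small specification rather than a block of free text. The sketch below is hypothetical: `VideoSpec`, `Scene`, and `render_prompts` are names invented for this example, and the rendered prompt format is an assumption, not what any particular model expects. It shows the four elements listed above being stated once and then applied to every scene.

```python
from dataclasses import dataclass, field


@dataclass
class Scene:
    order: int   # position in the sequence
    action: str  # what the character does in this scene


@dataclass
class VideoSpec:
    tone: str
    pacing: str
    must_stay_consistent: list[str]
    scenes: list[Scene] = field(default_factory=list)


def render_prompts(spec: VideoSpec) -> list[str]:
    """Render one prompt per scene, in order, repeating the shared constraints."""
    constraints = "; ".join(spec.must_stay_consistent)
    prompts = []
    for scene in sorted(spec.scenes, key=lambda s: s.order):
        prompts.append(
            f"Scene {scene.order}: {scene.action}. "
            f"Tone: {spec.tone}. Pacing: {spec.pacing}. "
            f"Keep consistent: {constraints}."
        )
    return prompts


spec = VideoSpec(
    tone="calm",
    pacing="slow",
    must_stay_consistent=["same red coat", "same street at dusk"],
    scenes=[
        Scene(order=2, action="she stops at a shop window"),
        Scene(order=1, action="she walks toward the camera"),
    ],
)
prompts = render_prompts(spec)
```

Because the constraints live in the spec rather than in each hand-written prompt, no scene can quietly omit them, which removes one common source of drift.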

Model selection shouldn’t be part of the workflow

One of the least discussed inefficiencies in AI video is model choice.

Users are often expected to decide:

- Which model to use for video

- Which one to use for audio

- Which version balances quality and cost

This adds friction at every step. It also leads to unnecessary experimentation and wasted credits.

In practice, this is not where users should be spending their time.

More effective systems treat model selection as an internal decision. They route each part of the workflow through the most suitable model without exposing that complexity.

This does two things:

- Reduces cognitive load

- Improves output consistency

It also allows the system to improve without requiring users to constantly adapt.
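Internal model selection amounts to a routing table that the user never sees. The following is a minimal sketch under stated assumptions: the task names and the model identifiers are placeholders, not real models, and `plan_request` is a hypothetical function. The user supplies only content, and the system decides which model handles each part.

```python
# Placeholder routing table. The model names are invented for illustration.
ROUTES = {
    "video": "video-model-a",
    "audio": "audio-model-b",
    "lip_sync": "sync-model-c",
}


def route(task: str) -> str:
    """Pick the model for a task, failing loudly on unknown tasks."""
    try:
        return ROUTES[task]
    except KeyError:
        raise ValueError(f"no model registered for task {task!r}") from None


def plan_request(request: dict[str, str]) -> list[tuple[str, str, str]]:
    """Turn user content into (task, model, content) steps.

    The caller never names a model. Swapping an entry in ROUTES changes
    the plan without changing what the user has to provide.
    """
    return [(task, route(task), content) for task, content in request.items()]


plan = plan_request(
    {"video": "a woman walks down a street", "audio": "soft narration"}
)
```

Updating the table is the only change needed when a better model becomes available, which is how a system can improve without users having to adapt.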

Speed only matters when the output holds

Fast generation has become a baseline expectation.

But speed without stability creates more work.

If a video needs multiple correction cycles (fixing faces, adjusting lip sync, reworking transitions), the initial speed advantage disappears. The process becomes slower overall.

What actually matters is how quickly a system can produce something that does not need fixing.

That depends on:

- Continuity across scenes

- Lip sync accuracy

- Minimal distortion

- Coherent transitions

When those are stable, speed becomes meaningful. When they are not, speed is just a number.
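The arithmetic behind this is simple enough to write down. The numbers below are made up purely for illustration, and `time_to_usable` is a hypothetical helper that assumes every correction cycle costs the same amount of time.

```python
def time_to_usable(
    generation_min: float,
    correction_cycles: int,
    minutes_per_cycle: float,
) -> float:
    """Total minutes until the output needs no more fixing."""
    return generation_min + correction_cycles * minutes_per_cycle


# Illustrative figures only, not measurements of any real tool.
fast_but_unstable = time_to_usable(
    generation_min=2, correction_cycles=5, minutes_per_cycle=10
)
slow_but_stable = time_to_usable(
    generation_min=15, correction_cycles=0, minutes_per_cycle=10
)
```

Under these assumptions the "fast" tool takes 52 minutes to reach a usable result and the "slow" one takes 15. The headline generation time is the smallest term in the sum.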

From generating clips to generating complete sequences

The category is now moving from clip generation to sequence generation.

That shift changes what users expect.

Instead of asking:

“Can this tool generate a good video?”

The better question is:

“Can this tool generate a complete, consistent sequence that can be used without rebuilding it?”

That requires:

- Multi-scene continuity

- Stable character identity

- Reliable audio-visual alignment

- Controlled variation across shots

These are not isolated features. They are system-level capabilities.

Platforms that treat them separately tend to struggle. Platforms that treat them as a single workflow start to produce results that feel closer to finished content.

What a reliable video generation system looks like

It becomes easier to evaluate a platform when the problem is defined correctly.

A reliable system should:

- Maintain character consistency across scenes

- Preserve lip sync without manual fixes

- Handle transitions without breaking continuity

- Reduce the need for repeated prompting

- Remove the burden of model selection

If these are in place, video generation becomes predictable.

If they are not, the workflow remains experimental.

At this stage, platforms like Intellemo AI are being built specifically around this idea, treating video generation as a complete system instead of a set of separate tools. By focusing on continuity, structured prompting, and automatic model orchestration, they are moving closer to making AI video actually usable in production workflows.

The shift from output quality to output reliability

The most important change in AI video is subtle.

The focus is moving away from:

“How good does it look?”

Toward:

“Can it be used without breaking?”

That shift matters because real-world use depends on reliability, not just quality.

A visually impressive clip is easy to generate. A consistent, long-form video that holds together from start to finish is much harder.

As systems begin to solve that problem, video generation starts to move out of experimentation and into actual production.

Where this leads

Once video becomes reliable, everything around it changes.

- Longer formats become practical

- Multiple variations become easier

- Content becomes more reusable

At that point, video is no longer a bottleneck.

It becomes a base layer.

And when that happens, the impact extends beyond video itself. It affects how ideas are expressed, how content is reused, and how consistently output can be produced.

That is the shift that is now starting to take shape.