The logic seems airtight: generate more outputs faster, and you’ll land on a usable one sooner. For indie makers and prompt-first creators working with AI visuals, this is the dominant mental model. Generate twenty variants in five minutes, pick the best one, move on. But after watching this play out across dozens of projects, I’ve started to question whether “fast” actually saves time—or just hides the rework until later.

The Illusion of Fast Iteration

The trap is easy to fall into. You type a prompt, hit generate, and within seconds you have a video clip or an image. It’s not quite right, so you adjust one word and generate again. Repeat this ten times, and you’ve burned twenty minutes reviewing, rejecting, and re-prompting. Each failed generation costs not just compute credits but creative momentum. The mental overhead of judging ten outputs in a row as “close but not usable” is real, and it drains more energy than most creators account for.

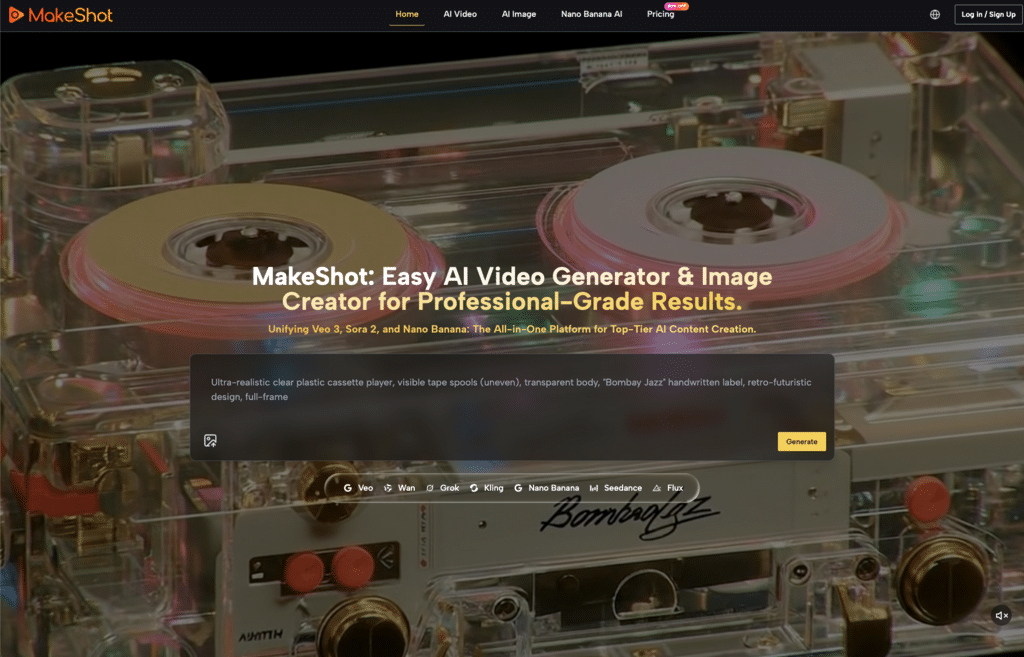

What often gets missed is that tools like MakeShot’s AI Video Generator offer parameters that constrain the output before it renders. Aspect ratio, style reference, and seed locking exist precisely to reduce guesswork. In practice, though, many users skip them entirely in favor of raw speed. The result is a pile of near-miss outputs that each require individual attention, rather than one deliberate output that lands cleanly.

Where Control Breaks Down in Practice

Weak prompt engineering is the first domino. Rushed prompts tend to be vague—“a cat walking through a neon city” leaves the model to fill in lighting, camera angle, motion type, and atmosphere on its own. The model guesses, and what comes back is unpredictable. You regenerate. The model guesses again, differently. This loop is the hidden cost of speed-first workflows.

Beyond prompts, creators often overlook the structural parameters that enforce consistency. Generating five images at 16:9 followed by three at 4:3 without noticing the mismatch produces a batch that looks jarringly inconsistent. Seed values left at their random default mean no two outputs share a coherent base, even when the prompt is identical. These aren’t failures of the model; they’re workflow gaps that get papered over when you’re moving fast.
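To make the batch-consistency point concrete, here is a minimal Python sketch that flags outputs whose aspect ratio drifts from the one the batch is supposed to use. It assumes you can read each output's pixel dimensions (for example from the file's metadata); the file names and the `find_ratio_mismatches` helper are hypothetical and not part of any platform's API.

```python
from math import gcd


def aspect_ratio(width: int, height: int) -> tuple[int, int]:
    """Reduce pixel dimensions to their simplest ratio, e.g. 1920x1080 -> (16, 9)."""
    divisor = gcd(width, height)
    return (width // divisor, height // divisor)


def find_ratio_mismatches(
    batch: dict[str, tuple[int, int]], expected: tuple[int, int]
) -> list[str]:
    """Return the names of outputs whose reduced aspect ratio differs from `expected`."""
    return [
        name
        for name, (width, height) in batch.items()
        if aspect_ratio(width, height) != expected
    ]


# Hypothetical batch: two 16:9 outputs and one 4:3 output that slipped in.
batch = {
    "shot_01.png": (1920, 1080),
    "shot_02.png": (1280, 720),
    "shot_03.png": (1024, 768),
}
print(find_ratio_mismatches(batch, expected=(16, 9)))  # ['shot_03.png']
```

This compares exact reduced ratios, so sizes that are only approximately 16:9 (such as 1366x768) would also be flagged; a tolerance on `width / height` may suit real batches better.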

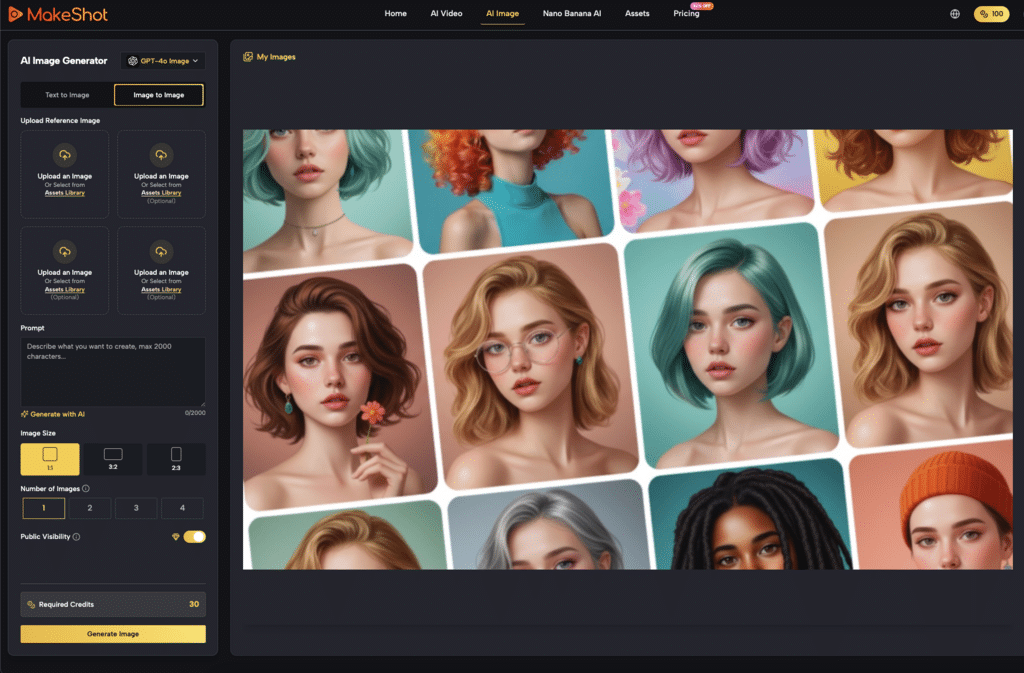

Platforms like Nano Banana AI provide structured controls that address this directly. Image size, generation count, and public visibility settings are surfaced as options rather than buried in a menu. But the tool only helps if you actually engage with those settings. Skipping them to save ten seconds often costs ten minutes of curation later.

Three Adjustments That Reclaim Both Speed and Control

The counterintuitive truth is that slowing down at the setup stage makes the entire pipeline faster. Here are three shifts that work in practice.

First, lock your parameters into a template before you generate anything. Set the seed, style preset, negative prompt, and aspect ratio once and reuse them across the batch. This prevents visual drift between iterations and means the only variable you’re testing is the prompt itself. It’s one extra minute of setup that eliminates entire rounds of regeneration.
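A parameter template can be as simple as an immutable record that every request in a batch is copied from. The sketch below assumes a generic Python workflow; `GenerationTemplate`, its field names, and `build_batch` are hypothetical and do not mirror any particular platform's settings or API. Because the dataclass is frozen, the only way to get a new request is to copy the template and change the prompt, which keeps seed, style preset, negative prompt, and aspect ratio identical across the batch.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GenerationTemplate:
    """Settings locked once per batch (hypothetical field names, not a real platform's API)."""
    seed: int
    style_preset: str
    negative_prompt: str
    aspect_ratio: str
    prompt: str = ""


def build_batch(template: GenerationTemplate, prompts: list[str]) -> list[GenerationTemplate]:
    """Create one request per prompt; every other setting is copied from the template."""
    return [replace(template, prompt=p) for p in prompts]


base = GenerationTemplate(
    seed=42,
    style_preset="cinematic",
    negative_prompt="blurry, extra limbs, watermark",
    aspect_ratio="16:9",
)
requests = build_batch(base, [
    "a cat walking through a neon city, low camera angle, slow dolly forward",
    "a cat walking through a neon city, overhead shot, rain-soaked streets",
])
for r in requests:
    print(r.seed, r.aspect_ratio, r.prompt)
```

Keeping the template immutable is the design point: nobody can quietly change the seed for one shot halfway through a batch.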

Second, validate composition before committing to full renders. On Nano Banana AI, you can generate a low-resolution preview or a single test image to check framing, lighting, and subject placement. If the composition doesn’t work, there’s no point generating four high-res variants of a bad idea. Approve the skeleton first, then scale up. The platform’s restyle and edit features suit this workflow well: you can fix a problem in the preview rather than trashing a batch of full renders.
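The preview-then-scale habit can be expressed as a small gate. This is a minimal sketch under stated assumptions: `render` stands in for whatever call or manual step produces images on your platform, and `approve` stands in for your own review of the preview. Both, along with `RenderRequest` and `preview_then_scale`, are hypothetical names and not Nano Banana AI's API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RenderRequest:
    """Hypothetical description of one render job."""
    prompt: str
    width: int
    height: int
    count: int


def preview_then_scale(
    prompt: str,
    render: Callable[[RenderRequest], list[str]],
    approve: Callable[[list[str]], bool],
    full_size: tuple[int, int] = (1920, 1080),
    preview_divisor: int = 4,
    variants: int = 4,
) -> list[str]:
    """Render one low-resolution preview; render full-size variants only if it is approved."""
    width, height = full_size
    preview = render(
        RenderRequest(prompt, width // preview_divisor, height // preview_divisor, count=1)
    )
    if not approve(preview):
        # Rework the prompt or composition instead of paying for full renders.
        return []
    return render(RenderRequest(prompt, width, height, count=variants))


# Stand-in renderer that records each request and returns placeholder names.
calls: list[RenderRequest] = []


def fake_render(request: RenderRequest) -> list[str]:
    calls.append(request)
    return [f"{request.width}x{request.height}_{i}" for i in range(request.count)]


rejected = preview_then_scale("a cat walking through a neon city", fake_render, lambda imgs: False)
approved = preview_then_scale("a cat walking through a neon city", fake_render, lambda imgs: True)
print(rejected, len(approved), len(calls))  # [] 4 3
```

The expensive call sits behind the cheap one: a rejected preview costs one small render, while an approved one costs the preview plus the full batch.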

Third, build in a deliberate pause between batch generations. Even a thirty-second review of the outputs before you hit “generate again” noticeably cuts down on wasteful re-rolling. It sounds trivial, but the habit of regenerating immediately without assessment is what creates those long, exhausting loops. A quick scan catches obvious issues (wrong subject, bad crop, garbled text) before you compound them.

What We Still Can’t Rush: The Limits of AI Predictability

Even with disciplined workflows, AI generation retains a degree of unpredictability that no amount of setup eliminates. Subtle artifacts, semantic misses, or stylistic quirks slip through. There is, as far as I can tell, no reliable automated quality gate for catching these yet. Human review remains the final filter, and that takes time.

It’s also worth noting that today’s optimized workflow may not hold up next month. Model updates, new parameter options, and shifting platform features mean what worked reliably in April could produce different results in May. The safest conclusion is that control-first workflows reduce rework but don’t eliminate it. Budget for at least one manual curation pass per batch, and treat any workflow that promises zero review with healthy skepticism.

When Fast Is Actually Fine (and When It Isn’t)

None of this is to say speed has no place. For early concept exploration, low-stakes ideation, or simply stress-testing a prompt direction, rapid-fire generation is useful. The goal there is volume and breadth, not consistency. Generate widely, spot interesting directions, then move to a controlled workflow for the final output.

But for client-facing deliverables, brand assets, or content that needs to cohere into a narrative, control must take priority. Matching your workflow speed to the output’s intended use is a skill that saves more time than any single tool feature, and it is the optimization most teams overlook.

The real cost of rushed AI video workflows isn’t the compute credits wasted on bad generations. It’s the creative momentum lost to repetitive review loops, the visual inconsistency that undermines a project’s polish, and the time spent redoing work that could have been done right once. Speed is a tool, not a strategy. Use it where it fits, and put it away where it doesn’t.