The prevailing narrative around generative AI often centers on the “magic” of the prompt. We are told that if we simply find the right combination of descriptive adjectives and technical modifiers, the model will return a flawless asset on the first try. For anyone working in a professional production environment—whether you are a performance marketer testing ad creatives or a creative lead building out a brand library—this “one-shot” mentality is a myth.

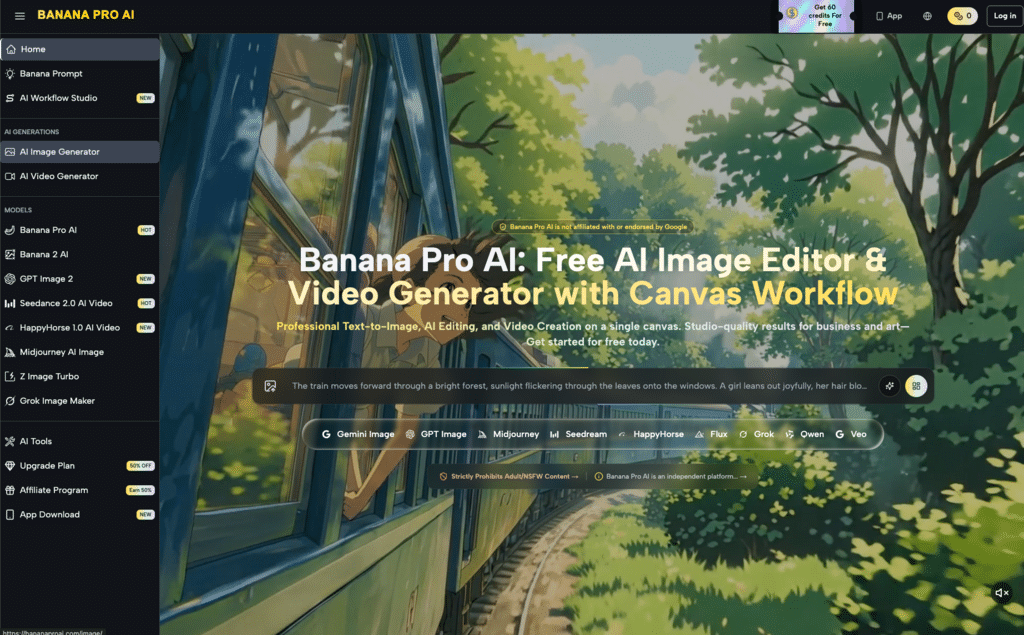

In practice, high-quality output is rarely the result of a single, perfectly phrased instruction. Instead, it is the product of an iterative stack: a combination of high-fidelity source assets, granular control via tools like Nano Banana Pro, and a rigorous feedback loop. To get the most out of systems like Banana AI, we have to move past the idea of prompting as “ordering a meal” and start seeing it as “conducting an orchestra.”

Contents

- The Limitations of Text-Only Input

- Refining the Workflow with Nano Banana Pro

- The Role of the AI Image Editor in Precision Work

- Source Assets: The Secret to Consistency

- Prompt Stewardship vs. Prompt Engineering

- Handling Uncertainty in Generative Output

- Building a Repeatable Asset Pipeline

- The Shift from Creation to Curation

The Limitations of Text-Only Input

Text is a lossy medium. When you describe a “minimalist glass bottle with soft morning light,” the model has to interpret what “minimalist” means against thousands of different training images. It has to guess the angle of the light and the refractive index of the glass. While modern models are incredibly capable, they cannot read your mind.

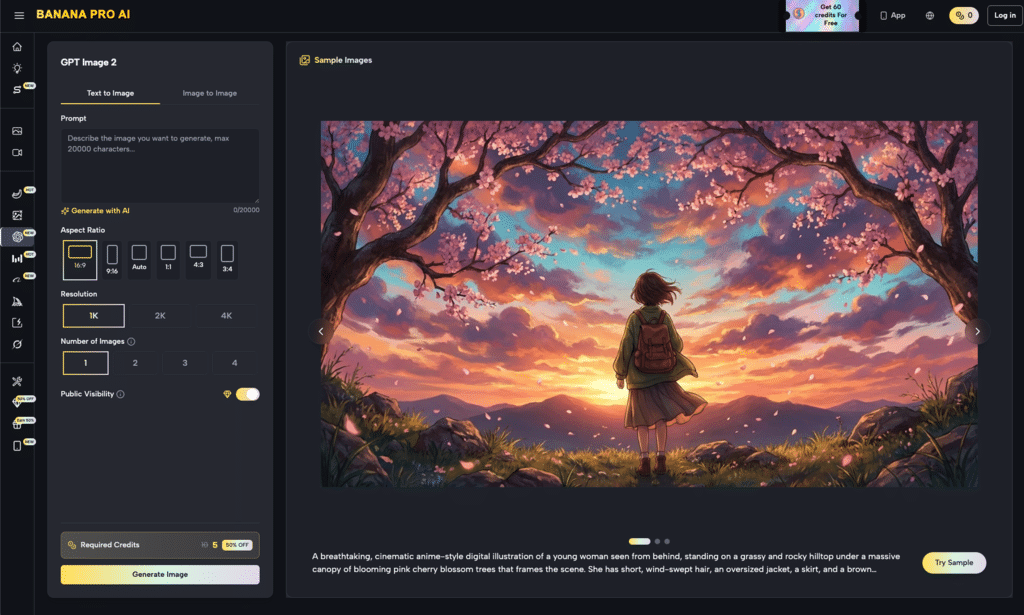

This is where the reliance on text-only prompts often fails. You might spend an hour tweaking words, only to find the composition is consistently off-centered or the color palette is too saturated. Professional workflows solve this by introducing source assets early in the process. By using an image-to-image workflow, you provide the model with a structural foundation—a “map” of where pixels should live—reducing the burden on the text prompt to define every single coordinate.

Refining the Workflow with Nano Banana Pro

When precision is the goal, the model choice dictates the ceiling of your output. In the current ecosystem, Nano Banana Pro has emerged as a preferred choice for creators who need more than just a generic stylistic approximation. Its architecture is designed to handle complex instructions while maintaining a high level of photorealism and textural detail.

However, even with a powerful engine like Nano Banana, the first generation is usually just a “sketch.” The real work begins in the iteration. This involves looking at the output, identifying where the model deviated from the intent, and deciding whether to adjust the prompt weight or the denoising strength.

It is important to acknowledge a technical reality here: no model, including Nano Banana, reliably handles high-density text within an image or complex anatomical overlaps (like interlocking hands) every time. There is persistent unpredictability in how latent space is sampled, meaning that “perfect” is a moving target. Professional creators accept this variance and treat each output as a starting point rather than a final result.

The Role of the AI Image Editor in Precision Work

Once you have a base image that is 80% of the way there, the most common mistake is to “re-roll” the entire prompt. This is a massive waste of time and computational resources. Instead, a targeted approach using an AI Image Editor allows for regional adjustments that preserve the parts of the image that are already working.

Using an editor for inpainting or localized refinement is what separates a professional workflow from an amateur one. If the lighting on a product is perfect but the background is too cluttered, you don’t start over. You mask the background and iterate on that specific area. This surgical precision is what allows Banana Pro users to scale their asset production without sacrificing the unique brand markers that make an image valuable.
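The masking idea can be illustrated without reference to any particular editor. The following is a minimal sketch in plain Python, assuming images are small grids of RGB tuples and that `regenerated` stands in for whatever a hypothetical inpainting step returns. It shows only the compositing principle: pixels outside the mask are copied from the original untouched.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Image = List[List[Pixel]]
Mask = List[List[bool]]  # True marks the region to replace


def composite_masked(original: Image, regenerated: Image, mask: Mask) -> Image:
    """Take regenerated pixels where the mask is True, original pixels elsewhere."""
    if not (len(original) == len(regenerated) == len(mask)):
        raise ValueError("original, regenerated, and mask must have the same height")
    result: Image = []
    for row_o, row_r, row_m in zip(original, regenerated, mask):
        if not (len(row_o) == len(row_r) == len(row_m)):
            raise ValueError("all rows must have the same width")
        result.append([r if m else o for o, r, m in zip(row_o, row_r, row_m)])
    return result


# Toy 2x2 image: left column is cluttered background, right column is the product.
product = (200, 200, 200)
clutter = (90, 60, 30)
clean = (255, 255, 255)

original = [[clutter, product], [clutter, product]]
# Hypothetical output of a regeneration pass; its product pixels are unwanted.
regenerated = [[clean, (0, 0, 0)], [clean, (0, 0, 0)]]
background_mask = [[True, False], [True, False]]

edited = composite_masked(original, regenerated, background_mask)
```

Because the product pixels never pass through the regeneration step, whatever was already working in the base image is preserved exactly.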

Source Assets: The Secret to Consistency

If you are building a campaign, consistency is more important than individual image quality. If Image A looks like a film photograph and Image B looks like a 3D render, the campaign fails.

The most effective way to ensure consistency across a project is to use a consistent source asset as a reference. This could be a mood board, a specific color palette, or a rough layout created in a traditional design tool. When you feed these into the Banana Pro system, you are giving the AI a narrow “lane” to operate in.

We must be realistic about the limitations of “style transfer.” While you can tell a model to “make this look like 1970s Kodachrome,” the results will vary based on the original image’s contrast and noise levels. It is often more effective to get the composition right first, and then use a secondary pass to apply the stylistic finish. This multi-step process is the core of the “Iterative Stack.”

Prompt Stewardship vs. Prompt Engineering

The term “prompt engineering” implies a level of mathematical certainty that doesn’t quite exist in generative media. A more accurate term might be “prompt stewardship.” This involves managing the evolution of a prompt over five, ten, or twenty iterations.

When working with Nano Banana, your prompt should evolve based on the visual evidence. If the model is ignoring a specific keyword, don’t just repeat the word; change the context. Instead of “blue background,” try “studio backdrop, solid cerulean, soft focus.”

The Anatomy of a High-Precision Prompt

- Subject Core: The “what” of the image. (e.g., A brushed aluminum laptop).

- Environmental Context: The “where.” (e.g., On a white marble desk, minimalist office).

- Technical Specs: The “how.” (e.g., 85mm lens, f/1.8, cinematic lighting).

- Negative Constraints: What to avoid. (e.g., No clutter, no reflections, no humans).

By breaking the prompt down this way, you can isolate variables. If the lighting is wrong, you only change the “Technical Specs” section. This scientific approach to iteration is what separates a lucky “roll” from a repeatable professional process.
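One way to make this variable isolation concrete is to store the four sections separately and only join them at the last moment. The sketch below is illustrative: the `PromptSpec` class, its field names, and the way the negative constraints are appended to the rendered string are all assumptions, not part of any Banana AI interface. Many tools accept negative constraints through a separate field instead.

```python
from dataclasses import dataclass, fields, replace
from typing import List


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical container for the four prompt sections."""
    subject_core: str
    environmental_context: str
    technical_specs: str
    negative_constraints: str

    def render(self) -> str:
        # Assumption: a single text prompt with the constraints appended.
        positive = ", ".join(
            [self.subject_core, self.environmental_context, self.technical_specs]
        )
        return f"{positive}. Avoid: {self.negative_constraints}"


def changed_sections(before: PromptSpec, after: PromptSpec) -> List[str]:
    """List the sections that differ between two iterations of a prompt."""
    return [
        f.name
        for f in fields(PromptSpec)
        if getattr(before, f.name) != getattr(after, f.name)
    ]


base = PromptSpec(
    subject_core="A brushed aluminum laptop",
    environmental_context="on a white marble desk, minimalist office",
    technical_specs="85mm lens, f/1.8, cinematic lighting",
    negative_constraints="clutter, reflections, humans",
)

# The lighting is wrong, so only the technical section changes.
revised = replace(base, technical_specs="85mm lens, f/1.8, soft window light")
```

Calling `changed_sections(base, revised)` confirms that a single variable moved between iterations, which makes it easier to attribute a change in the output to a change in the prompt.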

Handling Uncertainty in Generative Output

Even with the best tools, there are moments of friction. One significant limitation currently facing the industry is “semantic drift.” This happens when a prompt becomes so long and complex that the model begins to weight one part of it far more heavily than the rest, prioritizing the end of the sentence over the beginning, or vice versa.

When you encounter this in the Banana AI ecosystem, the best move is often to simplify. Strip the prompt back to its core, get a solid base image, and then use the editor to add the complexity back in layers. Expecting the model to solve a 20-variable equation in one go is a recipe for frustration.

Another area of uncertainty is color accuracy. If a brand requires a specific Pantone shade or hex value, generative models often struggle to reproduce it exactly, because the rendered color of any surface shifts with the lighting and shadows in the scene. In these cases, the expectation should be “close enough for a base,” with the final color correction happening in post-production.
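The “close enough for a base” judgment can be turned into a simple check. The sketch below compares a color sampled from a generated image against a brand color using plain Euclidean distance in RGB. This is a crude proxy chosen for brevity: perceptual metrics such as CIE Delta E are better suited to real color work, and the hex values and the tolerance of 30 are arbitrary assumptions for illustration.

```python
import math
from typing import Tuple

RGB = Tuple[int, int, int]


def hex_to_rgb(hex_code: str) -> RGB:
    """Convert a '#RRGGBB' string to an (r, g, b) tuple."""
    value = hex_code.lstrip("#")
    if len(value) != 6:
        raise ValueError(f"expected a 6-digit hex color, got {hex_code!r}")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return (r, g, b)


def rgb_distance(a: RGB, b: RGB) -> float:
    """Euclidean distance between two RGB colors (not perceptually uniform)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def needs_color_correction(
    sampled_hex: str, brand_hex: str, tolerance: float = 30.0
) -> bool:
    """Flag an image for post-production if the sampled color is too far off."""
    distance = rgb_distance(hex_to_rgb(sampled_hex), hex_to_rgb(brand_hex))
    return distance > tolerance


# Hypothetical values: a brand blue and a color sampled from a generated image.
brand_hex = "#0057B8"
sampled_hex = "#1A5FAF"
flagged = needs_color_correction(sampled_hex, brand_hex, tolerance=10.0)
```

A check like this does not fix the color. It only sorts outputs into those that can go straight to review and those that need a correction pass first.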

Building a Repeatable Asset Pipeline

For content teams, the goal isn’t just one great image—it’s a hundred. Building a pipeline around these tools involves creating a library of “anchor prompts” and “source templates.”

- Phase 1: The Anchor. Use a high-quality model like Nano Banana to establish the visual language of the project.

- Phase 2: The Variation. Use image-to-image workflows to create different angles or scenarios while keeping the subject consistent.

- Phase 3: The Polish. Use the editor to fix minor artifacts, adjust lighting, or swap out elements for specific regional markets.

This workflow treats the AI as a collaborator in a production line rather than a solitary artist. It acknowledges that while the AI can do the heavy lifting of rendering and texture, the human operator provides the strategic oversight and the final quality control.
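The three phases can be sketched as a small pipeline. Everything below is hypothetical: the `Asset` record and the functions `make_anchor`, `make_variations`, and `polish` are placeholders that only record what would happen, standing in for real generation and editing calls. The point is the shape of the flow, in which one anchor fans out into many variations that each receive the same polish pass.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Asset:
    """Hypothetical record of an image and the steps that produced it."""
    name: str
    history: List[str] = field(default_factory=list)


def make_anchor(prompt: str) -> Asset:
    # Phase 1: establish the visual language (placeholder for a model call).
    return Asset(name="anchor", history=[f"anchor from prompt: {prompt}"])


def make_variations(anchor: Asset, scenarios: List[str]) -> List[Asset]:
    # Phase 2: one image-to-image variation per scenario, derived from the anchor.
    return [
        Asset(name=f"{anchor.name}-{s}", history=anchor.history + [f"variation: {s}"])
        for s in scenarios
    ]


def polish(asset: Asset, fixes: List[str]) -> Asset:
    # Phase 3: targeted edits applied to an individual variation.
    return Asset(
        name=asset.name,
        history=asset.history + [f"polish: {fix}" for fix in fixes],
    )


def run_pipeline(prompt: str, scenarios: List[str], fixes: List[str]) -> List[Asset]:
    anchor = make_anchor(prompt)
    return [polish(v, fixes) for v in make_variations(anchor, scenarios)]


results = run_pipeline(
    prompt="A brushed aluminum laptop on a white marble desk",
    scenarios=["side-angle", "top-down"],
    fixes=["remove artifacts"],
)
```

Keeping a history on each asset is a design choice rather than a requirement. It gives the human operator a record to review during final quality control.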

The Shift from Creation to Curation

As the technology behind Nano Banana Pro and similar engines continues to advance, the bottleneck for creators is no longer the ability to generate an image—it is the ability to choose the right image and refine it to a professional standard.

The “Iterative Stack” is a philosophy of work that favors patience and precision over speed. It recognizes that the prompt is just the beginning. By leveraging source assets, specialized models, and surgical editing tools, creators can move away from the lottery of random generation and toward a disciplined, professionalized creative process.

The future of AI media isn’t about the model that can generate the best image from a single word; it’s about the platform that gives the creator the most control over the evolution of that image. In that transition from “generation” to “iteration,” the real value of the modern AI toolkit is finally realized.